Simple feedforward network with contiguous parameter storage.


#include <network.hpp>

|

| | Network ()=default |

| |

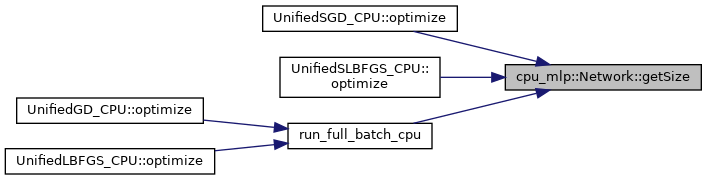

| size_t | getSize () const |

| | Total number of parameters. More...

|

| |

| template<int In, int Out, typename Activation = Linear> |

| void | addLayer () |

| | Append a dense layer to the network. More...

|

| |

| void | bindParams (unsigned int seed=kDefaultSeed) |

| | Bind and initialize parameters and gradient buffers. More...

|

| |

| const Eigen::MatrixXd & | forward (const Eigen::MatrixXd &input) |

| | Run a forward pass for a batch of inputs. More...

|

| |

| void | backward (const Eigen::MatrixXd &loss_grad) |

| | Run a backward pass from output loss gradients. More...

|

| |

| void | zeroGrads () |

| | Zero the gradient buffer. More...

|

| |

| double * | getParamsData () |

| | Access raw parameter buffer. More...

|

| |

| double * | getGradsData () |

| | Access raw gradient buffer. More...

|

| |

| void | setParams (const Eigen::VectorXd &new_params) |

| | Replace parameters with a new vector. More...

|

| |

| void | getGrads (Eigen::VectorXd &out_grads) |

| | Copy gradients to output vector. More...

|

| |

| void | test (const Eigen::MatrixXd &inputs, const Eigen::MatrixXd &targets, std::string label="Test Results") |

| | Evaluate accuracy and MSE for a dataset. More...

|

| |

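The class keeps every layer's parameters in one contiguous buffer. The sketch below is a minimal, hypothetical illustration of that idea, not the library's code: FlatStorage, LayerSlot, weight() and bias() are invented names, and it assumes each dense layer stores an Out x In weight matrix (column-major, Eigen's default order) followed by Out biases.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical record of where one dense layer lives inside the flat buffer.
struct LayerSlot {
    std::size_t in, out;
    std::size_t offset;  // index of the layer's first weight in the buffer
    std::size_t weightCount() const { return in * out; }
};

// All layers share one contiguous std::vector<double>; each layer is an offset.
struct FlatStorage {
    std::vector<LayerSlot> slots;
    std::vector<double> params;

    void addLayer(std::size_t in, std::size_t out) {
        const std::size_t offset = params.size();
        slots.push_back({in, out, offset});
        params.resize(offset + in * out + out, 0.0);  // weights, then biases
    }

    // Weight W(r, c) of layer l, stored column-major (Eigen's default order).
    double& weight(std::size_t l, std::size_t r, std::size_t c) {
        const LayerSlot& s = slots.at(l);
        assert(r < s.out && c < s.in);
        return params[s.offset + c * s.out + r];
    }

    // Bias b(r) of layer l, stored right after that layer's weights.
    double& bias(std::size_t l, std::size_t r) {
        const LayerSlot& s = slots.at(l);
        assert(r < s.out);
        return params[s.offset + s.weightCount() + r];
    }
};
```

Because every layer is only an offset into one buffer, a single pointer and a length describe the whole model, which is presumably what the raw-buffer accessors getParamsData() and getGradsData() expose.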

◆ Network()

cpu_mlp::Network::Network() [default]

◆ addLayer()

template<int In, int Out, typename Activation = Linear>

void cpu_mlp::Network::addLayer() [inline]

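Append a dense layer to the network. In and Out are the layer's input and output widths, and Activation selects its activation function (Linear by default).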
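Because addLayer() takes no function arguments, In and Out cannot be deduced and must be given explicitly (for an instance net, something like net.addLayer<4, 8>(), with Activation falling back to Linear). Since the widths are compile-time constants, width mismatches between adjacent layers can in principle be caught at compile time. The sketch below shows that idea with hypothetical names (DenseSpec, chains, and stand-in Linear and ReLU types); it assumes each layer holds Out x In weights plus Out biases and is not taken from network.hpp.

```cpp
#include <cassert>
#include <cstddef>

// Stand-in activation types; the library's Linear type may look different.
struct Linear { static double apply(double x) { return x; } };
struct ReLU { static double apply(double x) { return x > 0.0 ? x : 0.0; } };

// Compile-time description of a dense layer, mirroring addLayer<In, Out, Activation>.
template <int In, int Out, typename Activation = Linear>
struct DenseSpec {
    static_assert(In > 0 && Out > 0, "layer widths must be positive");
    static constexpr int in = In;
    static constexpr int out = Out;
    // Assumed layout: Out x In weights plus Out biases.
    static constexpr std::size_t params =
        static_cast<std::size_t>(In) * static_cast<std::size_t>(Out) +
        static_cast<std::size_t>(Out);
    using activation = Activation;
};

// Adjacent layers fit together when the first one's Out equals the next one's In.
template <typename A, typename B>
constexpr bool chains() { return A::out == B::in; }
```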

◆ backward()

void cpu_mlp::Network::backward(const Eigen::MatrixXd &loss_grad) [inline]

Run a backward pass from output loss gradients.
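For a dense layer Y = W X + b with one sample per column of X, the backward pass turns dL/dY into dW = dY Xᵀ, db = the row sums of dY, and dX = Wᵀ dY, which is handed to the previous layer. The sketch below is a hypothetical, linear-activation version using a small column-major Mat type instead of Eigen. The sample-per-column layout and the accumulate-into-gradients behavior (suggested by the separate zeroGrads() call) are assumptions. With a nonlinear activation, dY would first be multiplied elementwise by the activation's derivative.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Minimal column-major matrix: element (r, c) is data[c * rows + r].
struct Mat {
    std::size_t rows, cols;
    std::vector<double> data;
    Mat(std::size_t r, std::size_t c) : rows(r), cols(c), data(r * c, 0.0) {}
    double& operator()(std::size_t r, std::size_t c) { return data[c * rows + r]; }
    double operator()(std::size_t r, std::size_t c) const { return data[c * rows + r]; }
};

// Backward pass of a linear dense layer Y = W * X + b (one sample per column).
// Gradients are added into dW and db, so those buffers must be zeroed beforehand.
// Returns dL/dX, which becomes the incoming gradient of the previous layer.
Mat denseBackward(const Mat& W, const Mat& X, const Mat& dY,
                  Mat& dW, std::vector<double>& db) {
    assert(W.cols == X.rows && dY.rows == W.rows && dY.cols == X.cols);
    assert(dW.rows == W.rows && dW.cols == W.cols && db.size() == W.rows);
    Mat dX(W.cols, X.cols);
    for (std::size_t s = 0; s < X.cols; ++s) {
        for (std::size_t o = 0; o < W.rows; ++o) {
            const double g = dY(o, s);
            db[o] += g;                    // db: sum of dY over the batch
            for (std::size_t i = 0; i < W.cols; ++i) {
                dW(o, i) += g * X(i, s);   // dW = dY * X^T
                dX(i, s) += W(o, i) * g;   // dX = W^T * dY
            }
        }
    }
    return dX;
}
```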

◆ bindParams()

void cpu_mlp::Network::bindParams(unsigned int seed = kDefaultSeed) [inline]

Bind and initialize parameters and gradient buffers.
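bindParams() allocates the parameter and gradient buffers and initializes them from a seed, so the same seed should reproduce the same starting weights. The sketch below assumes Glorot-uniform weights and zero biases; the library's actual scheme is not documented here. bindParamsSketch, DenseShape and kSketchSeed are hypothetical names (the value of kDefaultSeed is not given).

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <random>
#include <vector>

// Hypothetical stand-in for kDefaultSeed.
constexpr unsigned int kSketchSeed = 42;

struct DenseShape { std::size_t in, out; };

// Allocate one flat parameter buffer and a matching gradient buffer, then fill
// weights with Glorot-uniform values; biases and gradients start at zero.
void bindParamsSketch(const std::vector<DenseShape>& layers,
                      std::vector<double>& params,
                      std::vector<double>& grads,
                      unsigned int seed = kSketchSeed) {
    std::size_t total = 0;
    for (const auto& l : layers) total += l.in * l.out + l.out;
    params.assign(total, 0.0);
    grads.assign(total, 0.0);

    std::mt19937 rng(seed);  // fixed seed -> reproducible initialization
    std::size_t offset = 0;
    for (const auto& l : layers) {
        const double limit = std::sqrt(6.0 / static_cast<double>(l.in + l.out));
        std::uniform_real_distribution<double> dist(-limit, limit);
        for (std::size_t i = 0; i < l.in * l.out; ++i) params[offset + i] = dist(rng);
        offset += l.in * l.out + l.out;  // skip this layer's biases (left at zero)
    }
}
```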

◆ forward()

const Eigen::MatrixXd& cpu_mlp::Network::forward(const Eigen::MatrixXd &input) [inline]

Run a forward pass for a batch of inputs.
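forward() returns a const reference, so the output matrix presumably lives inside the Network and may be overwritten by the next call; copy it if it has to outlive that call. One dense layer's share of the pass is sketched below with a hypothetical Mat type and denseForward function, assuming one sample per column and ReLU as the example nonlinearity.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Minimal column-major matrix: element (r, c) is data[c * rows + r], as in Eigen's default.
struct Mat {
    std::size_t rows, cols;
    std::vector<double> data;
    Mat(std::size_t r, std::size_t c) : rows(r), cols(c), data(r * c, 0.0) {}
    double& operator()(std::size_t r, std::size_t c) { return data[c * rows + r]; }
    double operator()(std::size_t r, std::size_t c) const { return data[c * rows + r]; }
};

// One dense layer applied to a whole batch: Y = act(W * X + b).
// W is Out x In, b has Out entries, X has one sample per column.
Mat denseForward(const Mat& W, const std::vector<double>& b, const Mat& X, bool relu) {
    assert(W.cols == X.rows && b.size() == W.rows);
    Mat Y(W.rows, X.cols);
    for (std::size_t s = 0; s < X.cols; ++s) {      // each sample in the batch
        for (std::size_t o = 0; o < W.rows; ++o) {  // each output unit
            double z = b[o];
            for (std::size_t i = 0; i < W.cols; ++i) z += W(o, i) * X(i, s);
            Y(o, s) = relu ? std::max(0.0, z) : z;
        }
    }
    return Y;
}
```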

◆ getGrads()

void cpu_mlp::Network::getGrads(Eigen::VectorXd &out_grads) [inline]

Copy gradients to output vector.

◆ getGradsData()

double* cpu_mlp::Network::getGradsData() [inline]

Access raw gradient buffer.

◆ getParamsData()

double* cpu_mlp::Network::getParamsData() [inline]

Access raw parameter buffer.

◆ getSize()

size_t cpu_mlp::Network::getSize() const [inline]

Total number of parameters.
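Assuming each dense layer stores an n_l x n_{l-1} weight matrix plus n_l biases (an assumption, not stated in this reference), the count returned for layer widths n_0, ..., n_L would be:

$$
P = \sum_{l=1}^{L} \left( n_{l-1}\, n_l + n_l \right),
\qquad
n = (784, 128, 10):\; P = (784 \cdot 128 + 128) + (128 \cdot 10 + 10) = 100480 + 1290 = 101770.
$$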

◆ setParams()

void cpu_mlp::Network::setParams(const Eigen::VectorXd &new_params) [inline]

Replace parameters with a new vector.
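setParams() copies values in and getGrads() copies gradients out, in contrast to the raw-pointer accessors. The sketch below uses std::vector in place of Eigen::VectorXd with hypothetical names, and assumes new_params must match getSize() exactly and that the output vector is resized to fit.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Copy new values into the existing parameter buffer (sizes must match).
void setParamsSketch(std::vector<double>& params, const std::vector<double>& newParams) {
    assert(newParams.size() == params.size());  // a size mismatch would break the layout
    std::copy(newParams.begin(), newParams.end(), params.begin());
}

// Copy the gradient buffer out, resizing the destination to fit.
void getGradsSketch(const std::vector<double>& grads, std::vector<double>& out) {
    out.resize(grads.size());
    std::copy(grads.begin(), grads.end(), out.begin());
}
```

Writing into the existing buffer, rather than swapping in a new allocation, keeps any per-layer offsets or pointers into that buffer valid.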

◆ test()

void cpu_mlp::Network::test(const Eigen::MatrixXd &inputs, const Eigen::MatrixXd &targets, std::string label = "Test Results") [inline]

Evaluate accuracy and MSE for a dataset.
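test() returns void, so its accuracy and MSE are presumably reported (for example printed under label) rather than returned. The sketch below shows one common way to compute both, assuming one-hot class targets (accuracy counts argmax matches) and MSE averaged over every output of every sample. evaluateSketch and EvalResult are hypothetical, and predictions are passed in directly rather than produced by forward().

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

using Batch = std::vector<std::vector<double>>;  // one vector per sample

struct EvalResult { double accuracy; double mse; };

std::size_t argmaxIndex(const std::vector<double>& v) {
    std::size_t best = 0;
    for (std::size_t i = 1; i < v.size(); ++i)
        if (v[i] > v[best]) best = i;
    return best;
}

// Accuracy: fraction of samples whose largest prediction matches the target's largest entry.
// MSE: squared error averaged over all outputs of all samples.
EvalResult evaluateSketch(const Batch& preds, const Batch& targets) {
    assert(!preds.empty() && preds.size() == targets.size());
    std::size_t correct = 0, count = 0;
    double sq = 0.0;
    for (std::size_t s = 0; s < preds.size(); ++s) {
        assert(preds[s].size() == targets[s].size());
        if (argmaxIndex(preds[s]) == argmaxIndex(targets[s])) ++correct;
        for (std::size_t k = 0; k < preds[s].size(); ++k) {
            const double d = preds[s][k] - targets[s][k];
            sq += d * d;
            ++count;
        }
    }
    return {static_cast<double>(correct) / preds.size(), sq / count};
}
```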

◆ zeroGrads()

void cpu_mlp::Network::zeroGrads() [inline]

Zero the gradient buffer.
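Since zeroGrads() exists as a separate call, gradients presumably accumulate in the shared buffer until cleared. A typical step would then be zeroGrads(), forward(), backward() with the loss gradient, and an update through getParamsData() and getGradsData(). The hypothetical GradBuffer below shows why the reset matters.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Gradient buffer that accumulates contributions until explicitly cleared.
struct GradBuffer {
    std::vector<double> grads;
    explicit GradBuffer(std::size_t n) : grads(n, 0.0) {}

    // Each backward-style call adds its gradient into the buffer.
    void accumulate(const std::vector<double>& g) {
        assert(g.size() == grads.size());
        for (std::size_t i = 0; i < g.size(); ++i) grads[i] += g[i];
    }

    // Reset before the next independent gradient computation.
    void zero() { std::fill(grads.begin(), grads.end(), 0.0); }
};
```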

The documentation for this class was generated from the following file: