Feed-forward dense network with GPU-backed parameters and gradients. More...

Public Member Functions | |

| CudaNetwork (CublasHandle &handle) | |

| Construct a network tied to a cuBLAS handle. More... | |

| void | addLayer (int in, int out, ActivationType act) |

| Append a layer definition. More... | |

| void | bindParams (unsigned int seed=kDefaultSeed) |

| Allocate parameter/gradient buffers and initialize weights. More... | |

| size_t | params_size () const |

| Total number of parameters. More... | |

| int | output_size () const |

| Output dimension of the last layer. More... | |

| CudaScalar * | params_data () |

| Mutable device pointer to parameters. More... | |

| CudaScalar * | grads_data () |

| Mutable device pointer to gradients. More... | |

| void | zeroGrads () |

| Zero all gradients. More... | |

| void | forward_only (const CudaScalar *input, int batch) |

| Forward pass only (no gradient computation) More... | |

| CudaScalar | compute_loss_and_grad (const CudaScalar *input, const CudaScalar *target, int batch) |

| Compute MSE loss and gradients for a batch. More... | |

| void | copy_output_to_host (CudaScalar *host, size_t n) const |

| Copy the latest output activations to host memory. More... | |

| int | last_batch () const |

| Batch size used in the last forward pass. More... | |

Detailed Description

Feed-forward dense network with GPU-backed parameters and gradients.

Constructor & Destructor Documentation

◆ CudaNetwork()

|

inlineexplicit |

Construct a network tied to a cuBLAS handle.

Member Function Documentation

◆ addLayer()

|

inline |

Append a layer definition.

- Parameters

-

in Input dimension out Output dimension act Activation function

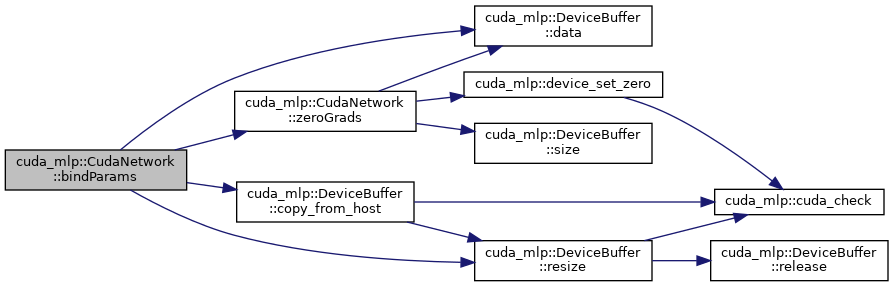

◆ bindParams()

|

inline |

Allocate parameter/gradient buffers and initialize weights.

- Parameters

-

seed RNG seed for weight initialization

Here is the call graph for this function:

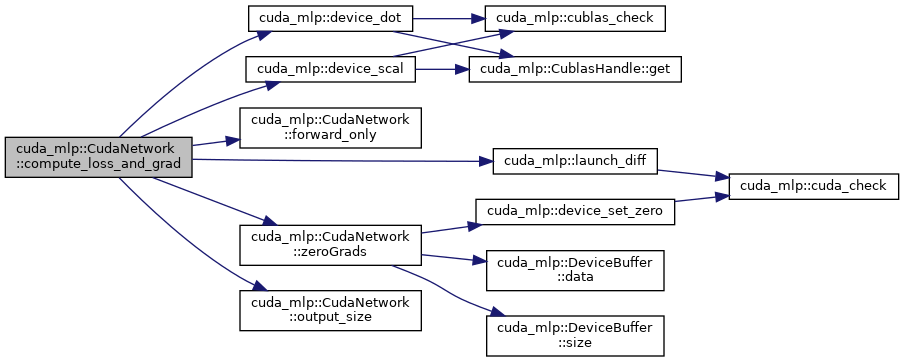

◆ compute_loss_and_grad()

|

inline |

Compute MSE loss and gradients for a batch.

- Parameters

-

input Input batch (in x batch) target Target batch (out x batch) batch Batch size

- Returns

- Mean squared error loss

Here is the call graph for this function:

◆ copy_output_to_host()

|

inline |

Copy the latest output activations to host memory.

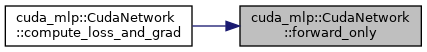

◆ forward_only()

|

inline |

Forward pass only (no gradient computation)

- Parameters

-

input Input batch (in x batch) batch Batch size

Here is the caller graph for this function:

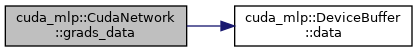

◆ grads_data()

|

inline |

Mutable device pointer to gradients.

Here is the call graph for this function:

◆ last_batch()

|

inline |

Batch size used in the last forward pass.

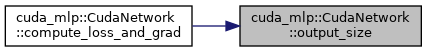

◆ output_size()

|

inline |

Output dimension of the last layer.

Here is the caller graph for this function:

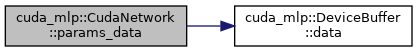

◆ params_data()

|

inline |

Mutable device pointer to parameters.

Here is the call graph for this function:

◆ params_size()

|

inline |

Total number of parameters.

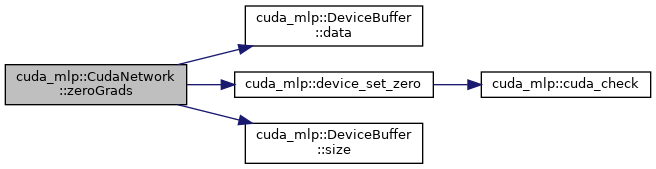

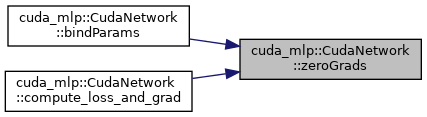

◆ zeroGrads()

|

inline |

Zero all gradients.

Here is the call graph for this function:

Here is the caller graph for this function:

The documentation for this class was generated from the following file:

- src/cuda/network.cuh