Classes | |

| class | CublasHandle |

| RAII-managed cuBLAS handle. More... | |

| class | DeviceBuffer |

| Owning buffer for device memory. More... | |

| class | CudaGD |

| Gradient descent with optional momentum. More... | |

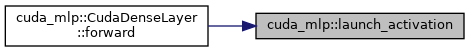

| class | CudaDenseLayer |

| Fully connected layer with activation, using column-major matrices. More... | |

| class | CudaLBFGS |

| Limited-memory BFGS with Armijo backtracking line search. More... | |

| class | CudaMinimizerBase |

| Abstract base class for CUDA-based minimizers. More... | |

| class | CudaNetwork |

| Feed-forward dense network with GPU-backed parameters and gradients. More... | |

| class | CudaSGD |

| SGD with optional momentum and learning-rate decay. More... | |

Typedefs | |

| using | CudaScalar = float |

| Scalar type used across CUDA kernels and optimizers. More... | |

Enumerations | |

| enum class | ActivationType : int { Linear = 0 , Tanh = 1 , ReLU = 2 , Sigmoid = 3 } |

| Supported activation functions. More... | |

Functions | |

| void | cuda_check (cudaError_t err, const char *msg) |

| Check a CUDA API call and abort with a message on failure. More... | |

| void | cublas_check (cublasStatus_t status, const char *msg) |

| Check a cuBLAS API call and abort with a message on failure. More... | |

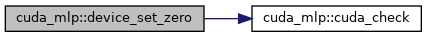

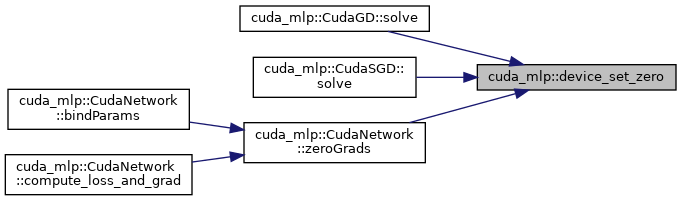

| void | device_set_zero (CudaScalar *ptr, size_t n) |

| Set device memory to zero. More... | |

| void | device_copy (CudaScalar *dst, const CudaScalar *src, size_t n) |

| Copy device-to-device. More... | |

| CudaScalar | device_dot (CublasHandle &handle, const CudaScalar *x, const CudaScalar *y, int n) |

| Compute dot product on device using cuBLAS. More... | |

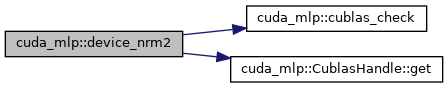

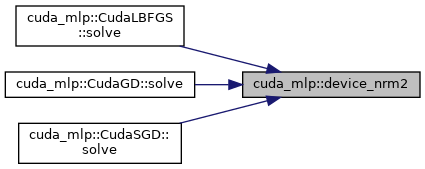

| CudaScalar | device_nrm2 (CublasHandle &handle, const CudaScalar *x, int n) |

| Compute Euclidean norm on device using cuBLAS. More... | |

| void | device_axpy (CublasHandle &handle, int n, CudaScalar alpha, const CudaScalar *x, CudaScalar *y) |

| y <- alpha * x + y (AXPY) on device using cuBLAS. More... | |

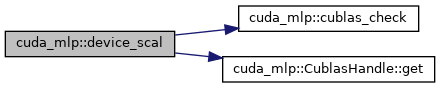

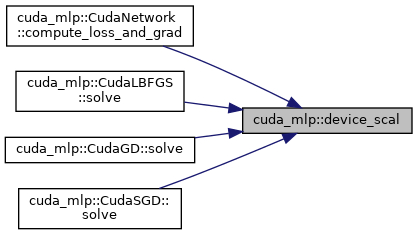

| void | device_scal (CublasHandle &handle, int n, CudaScalar alpha, CudaScalar *x) |

| Scale vector x <- alpha * x on device using cuBLAS. More... | |

| CudaScalar | activation_scale (ActivationType act) |

| scaling factor for initialization. More... | |

| __global__ void | add_bias_kernel (CudaScalar *z, const CudaScalar *b, int rows, int cols) |

| Kernel: add bias vector to column-major matrix. More... | |

| __global__ void | activation_kernel (CudaScalar *a, int n, int act) |

| Kernel: apply activation in-place. More... | |

| __global__ void | activation_deriv_kernel (CudaScalar *grad, const CudaScalar *a, int n, int act) |

| Kernel: multiply gradient by activation derivative. More... | |

| __global__ void | diff_kernel (const CudaScalar *output, const CudaScalar *target, CudaScalar *diff, int n) |

| Kernel: diff = output - target. More... | |

| __global__ void | sum_rows_kernel (const CudaScalar *mat, CudaScalar *out, int rows, int cols) |

| Kernel: sum columns (rows x cols) into a row vector. More... | |

| void | launch_add_bias (CudaScalar *z, const CudaScalar *b, int rows, int cols) |

| Launch add-bias kernel. More... | |

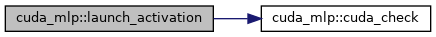

| void | launch_activation (CudaScalar *a, int n, ActivationType act) |

| Launch activation kernel. More... | |

| void | launch_activation_deriv (CudaScalar *grad, const CudaScalar *a, int n, ActivationType act) |

| Launch activation-derivative kernel. More... | |

| void | launch_diff (const CudaScalar *output, const CudaScalar *target, CudaScalar *diff, int n) |

| Launch diff kernel. More... | |

| void | launch_sum_rows (const CudaScalar *mat, CudaScalar *out, int rows, int cols) |

| Launch sum-rows kernel. More... | |

Typedef Documentation

◆ CudaScalar

| using cuda_mlp::CudaScalar = typedef float |

Scalar type used across CUDA kernels and optimizers.

Enumeration Type Documentation

◆ ActivationType

|

strong |

Function Documentation

◆ activation_deriv_kernel()

| __global__ void cuda_mlp::activation_deriv_kernel | ( | CudaScalar * | grad, |

| const CudaScalar * | a, | ||

| int | n, | ||

| int | act | ||

| ) |

Kernel: multiply gradient by activation derivative.

◆ activation_kernel()

| __global__ void cuda_mlp::activation_kernel | ( | CudaScalar * | a, |

| int | n, | ||

| int | act | ||

| ) |

Kernel: apply activation in-place.

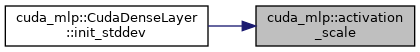

◆ activation_scale()

|

inline |

scaling factor for initialization.

◆ add_bias_kernel()

| __global__ void cuda_mlp::add_bias_kernel | ( | CudaScalar * | z, |

| const CudaScalar * | b, | ||

| int | rows, | ||

| int | cols | ||

| ) |

Kernel: add bias vector to column-major matrix.

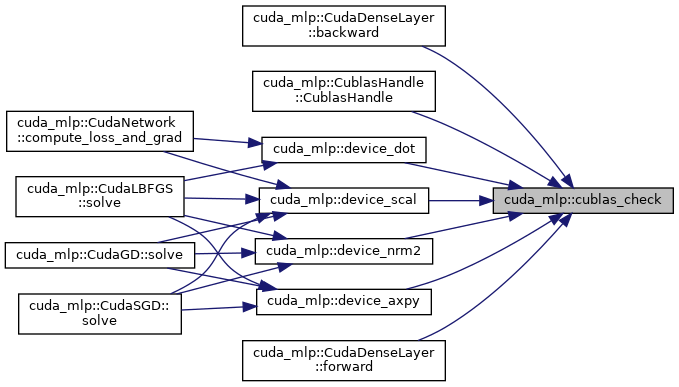

◆ cublas_check()

|

inline |

Check a cuBLAS API call and abort with a message on failure.

- Parameters

-

status cuBLAS status code. msg Context string describing the operation.

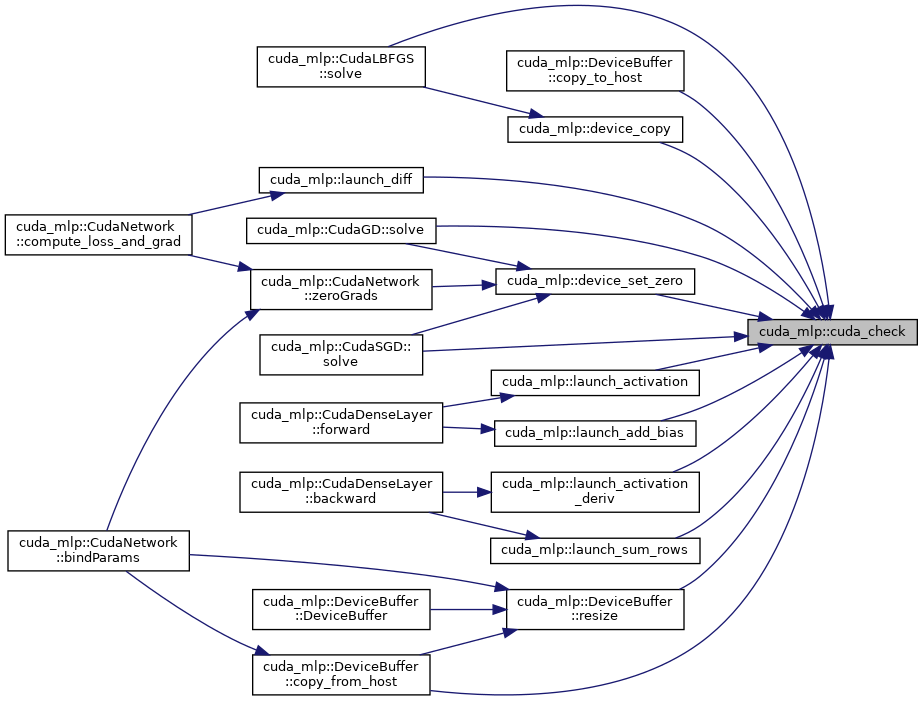

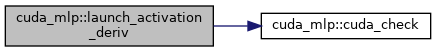

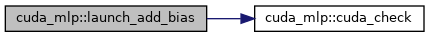

◆ cuda_check()

|

inline |

Check a CUDA API call and abort with a message on failure.

- Parameters

-

err CUDA error code returned by the runtime msg Context string describing the operation

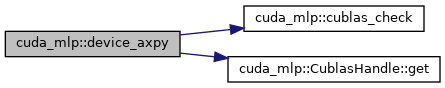

◆ device_axpy()

|

inline |

y <- alpha * x + y (AXPY) on device using cuBLAS.

◆ device_copy()

|

inline |

Copy device-to-device.

- Parameters

-

dst Destination device pointer. src Source device pointer. n Number of elements.

◆ device_dot()

|

inline |

Compute dot product on device using cuBLAS.

◆ device_nrm2()

|

inline |

Compute Euclidean norm on device using cuBLAS.

◆ device_scal()

|

inline |

Scale vector x <- alpha * x on device using cuBLAS.

◆ device_set_zero()

|

inline |

Set device memory to zero.

- Parameters

-

ptr Device pointer. n Number of elements.

◆ diff_kernel()

| __global__ void cuda_mlp::diff_kernel | ( | const CudaScalar * | output, |

| const CudaScalar * | target, | ||

| CudaScalar * | diff, | ||

| int | n | ||

| ) |

Kernel: diff = output - target.

◆ launch_activation()

|

inline |

Launch activation kernel.

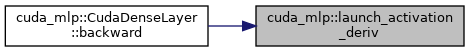

◆ launch_activation_deriv()

|

inline |

Launch activation-derivative kernel.

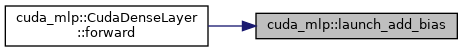

◆ launch_add_bias()

|

inline |

Launch add-bias kernel.

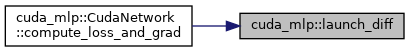

◆ launch_diff()

|

inline |

Launch diff kernel.

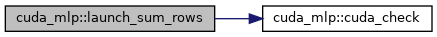

◆ launch_sum_rows()

|

inline |

Launch sum-rows kernel.

◆ sum_rows_kernel()

| __global__ void cuda_mlp::sum_rows_kernel | ( | const CudaScalar * | mat, |

| CudaScalar * | out, | ||

| int | rows, | ||

| int | cols | ||

| ) |

Kernel: sum columns (rows x cols) into a row vector.